Tracking Brand Mentions in AI Chatbots and Beyond

Why AI Visibility Is the New Brand Moat in 2026

How to measure AI visibility for marketing campaigns is now one of the most urgent questions in modern marketing — and most brands don't have a good answer yet.

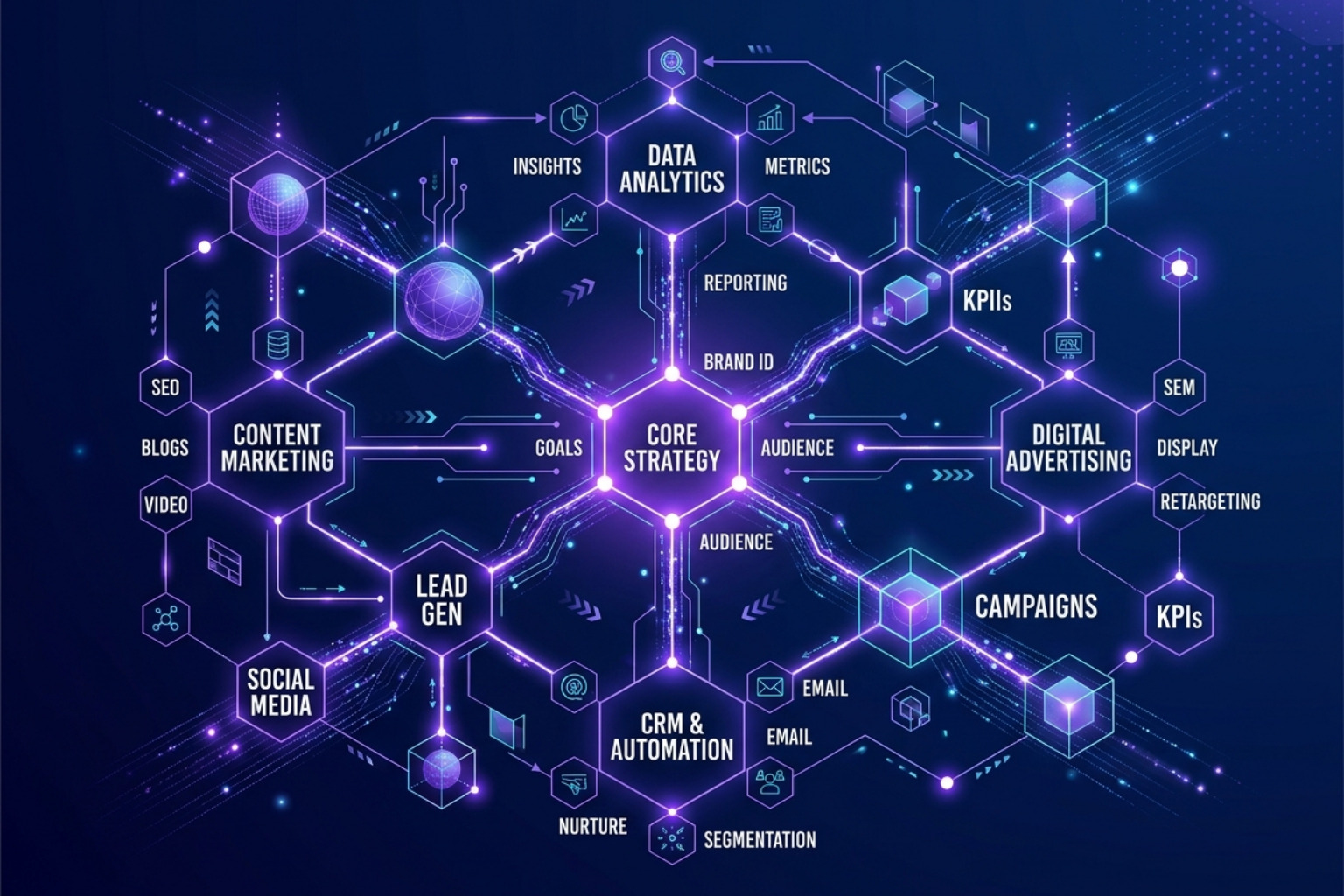

To measure AI visibility for your marketing campaigns, track these five core metrics:

- Brand mention rate — how often your brand appears across relevant AI-generated responses

- Share of voice (SOV) — your mentions vs. competitors' mentions for the same category queries

- Citation rate — how often AI platforms link to or reference your content as a source

- Sentiment score — whether your brand is framed positively, neutrally, or negatively in AI answers

- AI referral traffic — visits from platforms like ChatGPT, Perplexity, and Claude tracked in GA4

Over 60% of Google searches now surface an AI-generated answer. That means your brand can influence a purchase decision without ever receiving a click. Traditional analytics won't show you this. Google Search Console won't either. And if you're not measuring it, you're flying blind while competitors are not.

The stakes are real. Research shows that only 30% of brands appear consistently in consecutive AI answers — and just 1 in 5 sustain visibility across five runs of the same query. Meanwhile, the brands that do get cited convert at dramatically higher rates: one study found AI-referred visitors convert at 23 times the rate of traditional organic traffic.

This is the new dark funnel. And it's enormous.

I'm Florian Radke, brand strategist, fractional CMO, and founder of The Brand Algorithm. After 25 years building brands at the frontier of technology, from viral DTC campaigns to AI-driven content engines for international brands, I've made measuring AI visibility for marketing campaigns a central focus of my work in 2026. In this guide, I'll walk you through the tools, frameworks, and metrics that actually move the needle.

How to Measure AI Visibility for Marketing Campaigns: A 4-Step Framework

Measuring brand presence in the age of generative engines is fundamentally different from tracking keyword rankings. We are no longer dealing with static indexes; we are dealing with probability engines. If you ask ChatGPT a question ten times, you might get ten slightly different answers. To get a statistically valid picture, we need a rigorous framework that moves beyond manual "spot-checking."

We recommend a four-step process to operationalize your measurement:

- Prompt Engineering & Library Building: You cannot measure what you haven't defined. We must build a library of high-intent prompts—those "best of" or "how to" queries that lead directly to your product.

- Multi-Platform Monitoring: Visibility on ChatGPT does not guarantee visibility on Perplexity or Google AI Overviews. Each model has its own "consideration set" based on its training data and real-time web access.

- Statistical Sampling: Because AI responses vary, running a prompt once is meaningless. We need to run queries 60–100 times to establish a "Visibility Percentage."

- Operational Analysis: Turning raw mentions into AI content optimization strategies requires looking at sentiment, citation durability, and the quality of the surrounding context.
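To see why the number of runs in the sampling step matters, here is a minimal Python sketch that puts an uncertainty range around a Visibility Percentage. It uses a Wilson score interval, which is our choice for illustration and not something any specific tool is known to use; the function name and the sample counts are hypothetical.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for the share of runs that mention the brand."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical counts: the same 80% hit rate observed over 10 runs and over 100 runs.
for hits, runs in [(8, 10), (80, 100)]:
    low, high = wilson_interval(hits, runs)
    print(f"{hits}/{runs} runs: plausible visibility {low:.0%} to {high:.0%}")
```

With 10 runs the plausible range spans roughly 49% to 94%; with 100 runs it narrows to roughly 71% to 87%, which is why a single spot-check tells you very little.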

Establishing a Baseline for AI Visibility for Marketing Campaigns

Before you can optimize, you need to know where you stand. Establishing a baseline is about mapping your "brand footprint" across the LLM landscape. We start by categorizing our prompts into five distinct buckets:

- Direct Brand Queries: "Tell me about [Your Brand]."

- Category-Based Queries: "What are the best [Category] tools for small businesses?"

- Problem-Solution Queries: "How do I solve [Specific Pain Point]?"

- Comparison Queries: "[Your Brand] vs [Competitor]—which is better for [Use Case]?"

- Recommendation Queries: "I need a [Product Type] that does [Feature X]."
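The five buckets above can be kept as a small template library so every run uses identical wording. This is a minimal sketch, assuming the bracketed placeholders from the examples; the bucket keys, the `build_prompts` helper, and the fill-in values (Acme, Globex, and so on) are hypothetical.

```python
# Templates adapted from the five buckets; keys are illustrative names.
PROMPT_TEMPLATES = {
    "direct_brand": "Tell me about [Your Brand].",
    "category": "What are the best [Category] tools for small businesses?",
    "problem_solution": "How do I solve [Specific Pain Point]?",
    "comparison": "[Your Brand] vs [Competitor]: which is better for [Use Case]?",
    "recommendation": "I need a [Product Type] that does [Feature X].",
}


def build_prompts(templates: dict[str, str], values: dict[str, str]) -> dict[str, str]:
    """Replace each [Placeholder] in every template with its value."""
    prompts = {}
    for bucket, template in templates.items():
        prompt = template
        for placeholder, value in values.items():
            prompt = prompt.replace(f"[{placeholder}]", value)
        prompts[bucket] = prompt
    return prompts


# Hypothetical brand details used only for illustration.
values = {
    "Your Brand": "Acme",
    "Competitor": "Globex",
    "Category": "invoicing",
    "Specific Pain Point": "late client payments",
    "Use Case": "freelancers",
    "Product Type": "invoicing app",
    "Feature X": "automatic payment reminders",
}

prompts = build_prompts(PROMPT_TEMPLATES, values)
for bucket, prompt in prompts.items():
    print(f"{bucket}: {prompt}")
```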

To get a reliable baseline, we use a prompt library of 20–30 queries and run them across multiple platforms. Research suggests that the chance of getting the exact same list of brands in the same order from an AI is less than 1 in 1,000. Therefore, we focus on Visibility Percentage: the frequency with which our brand appears in the consideration set across 100 runs. If we appear 80 times, our visibility is 80%. This is the "North Star" metric for increasing brand visibility in AI-generated search results.
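As a rough illustration of the calculation, the sketch below counts how many saved responses mention a brand at least once. It assumes the responses have already been collected as plain text; the function name, the brand "Acme", and the sample responses are hypothetical, and simple word matching will miss misspellings or brand names that end in punctuation.

```python
import re


def visibility_percentage(responses: list[str], brand: str) -> float:
    """Percentage of responses that mention the brand as a whole word, ignoring case."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return 100 * hits / len(responses)


# Hypothetical responses from five runs of the same prompt.
sample_responses = [
    "Try Acme or Globex.",
    "Globex is popular.",
    "acme leads this category.",
    "Acmeology is unrelated.",
    "We suggest ACME.",
]

print(visibility_percentage(sample_responses, "Acme"))
```

Three of the five sample responses mention the brand, so the result is 60.0; "Acmeology" is not counted because the match is on whole words.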

Benchmarking Competitors to Improve AI Visibility for Marketing Campaigns

AI visibility is a zero-sum game. If an AI platform recommends three competitors and ignores you, you’ve lost the customer before they even reached a search results page. We measure Share of Voice (SOV) by calculating: (Your Mentions / Total Mentions in Category) × 100.

A "Market Leader" typically maintains an SOV of 30% or higher, while a "Challenger" sits between 15–25%. By benchmarking, we can identify "Competitor Gaps." If a competitor is cited 40% of the time for a specific problem-solution query and we are at 5%, we know exactly where our content authority is lacking.
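The SOV formula and the tier thresholds can be sketched in a few lines of Python. The mention counts and brand names below are hypothetical, and the label for values outside the two named ranges is our placeholder, since the tiers above leave those ranges unnamed.

```python
def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """(Brand mentions / total mentions in category) x 100 for each brand."""
    total = sum(mentions.values())
    if total == 0:
        return {brand: 0.0 for brand in mentions}
    return {brand: 100 * count / total for brand, count in mentions.items()}


def sov_tier(sov: float) -> str:
    """Map an SOV percentage to the tiers described in the text."""
    if sov >= 30:
        return "Market Leader"
    if 15 <= sov <= 25:
        return "Challenger"
    return "Unclassified"  # placeholder label; the text names no tier here


# Hypothetical mention counts for one problem-solution query cluster.
mentions = {"Our Brand": 5, "Competitor A": 40, "Competitor B": 35, "Competitor C": 20}

for brand, sov in share_of_voice(mentions).items():
    print(f"{brand}: {sov:.1f}% ({sov_tier(sov)})")
```

Running the same calculation per query bucket, instead of once for the whole library, is what surfaces the competitor gaps described above.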

We also look at Category Association. How strongly does the AI link our brand to specific keywords? If the AI consistently mentions us for "sustainable fashion" but never for "luxury apparel," we have a clear signal of how the algorithm perceives our brand positioning. For more on this, see "Measure AI Visibility: 5 Metrics That Prove Impact" (Brainlabs).

Metrics and Tools for Tracking Brand Mentions in AI Chatbots

To scale this measurement, we move beyond spreadsheets and into dedicated tracking environments. We need to monitor not just if we are mentioned, but how and why.

| Metric | What It Measures | Why It Matters in 2026 |
|---|---|---|
| Citation Rate | Frequency of links to your site | Direct evidence of authority and traffic potential. |
| Sentiment Score | Positive/Neutral/Negative framing | Protects brand equity from AI "hallucinations." |
| Source Diversity | Percentage of mentions from non-owned sites | 85% of brand mentions often come from third-party sites like Reddit or Wikipedia. |
| Position Ranking | Where you appear in a list (1st, 2nd, etc.) | Higher positions correlate with higher user trust. |
| Response Consistency | How often the AI gives the same answer | Measures the stability of your brand's presence. |
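Two of these metrics, Position Ranking and Response Consistency, are easy to compute once each run is stored as an ordered list of recommended brands. The sketch below is one possible definition, not an industry standard: it treats consistency as the share of runs that return the single most common list, and the brand names and runs are hypothetical.

```python
from collections import Counter
from typing import Optional


def average_position(runs: list[list[str]], brand: str) -> Optional[float]:
    """Mean 1-based list position of the brand across the runs that mention it."""
    positions = [run.index(brand) + 1 for run in runs if brand in run]
    return sum(positions) / len(positions) if positions else None


def response_consistency(runs: list[list[str]]) -> float:
    """Share of runs that return the most common ordered list of brands."""
    if not runs:
        return 0.0
    counts = Counter(tuple(run) for run in runs)
    return counts.most_common(1)[0][1] / len(runs)


# Hypothetical ordered brand lists from four runs of one prompt.
runs = [
    ["Acme", "Globex", "Initech"],
    ["Acme", "Globex", "Initech"],
    ["Globex", "Acme"],
    ["Initech", "Globex"],
]

print(average_position(runs, "Acme"))
print(response_consistency(runs))
```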

Tracking these metrics allows us to treat AI platforms as a measurable marketing channel. By integrating these insights with our marketing analytics, we can see the full journey from AI mention to conversion.

Top Platforms for AI Visibility Monitoring

In 2026, the landscape is fragmented. Marketers must prioritize monitoring based on where their specific audience lives:

- ChatGPT (OpenAI): Still the volume leader. It heavily favors high-authority sources like Wikipedia and Reddit.

- Perplexity: The "searcher's AI." It provides explicit citations, making it the easiest to track for referral traffic.

- Google AI Overviews: Critical for traditional SEOs. It aggregates web content and is highly sensitive to schema and structured data.

- Claude (Anthropic): Known for nuanced, long-form reasoning. It often provides more detailed brand comparisons.

- Gemini: Integrated into the Google ecosystem, influencing everything from Docs to Maps.

- Grok (xAI): Real-time data focus, making it essential for tracking trending brand sentiment and news.

The Best Tools for Automating Measurement

As the "dark funnel" expands, several tools have emerged to automate the measurement of AI visibility for marketing campaigns.

- Amplitude: Excellent for connecting AI mentions to behavioral analytics. It helps you see what users do after they find you via an AI recommendation.

- Profound: A favorite for agencies. It offers "pitch environments" and "client workspaces," allowing teams to audit a prospect's AI visibility before a sales call. It tracks all 10 major AI answer engines.

- AirOps: Focuses on automation. It allows you to run thousands of prompts daily across different models to identify patterns and shifts in real-time.

- Semrush (AI SEO Toolkit): Integrates AI tracking into the standard SEO workflow, making it easier for teams to transition from keyword tracking to generative engine optimization.

- Otterly.AI: Provides specialized brand monitoring that alerts you when your sentiment shifts or when a competitor starts stealing your share of voice in specific query clusters.

Connecting AI Visibility to Revenue and Pipeline

The ultimate goal of measuring visibility is proving ROI. While AI platforms are often "zero-click," they act as powerful pre-qualifiers. When a user asks an AI for a recommendation and then searches for your brand specifically, that is a direct result of AI visibility.

To connect visibility to the bottom line, we use a three-layer attribution model:

- Direct Attribution: Tracking AI referral traffic in GA4. While the volume may be lower than traditional search, the conversion rate is often 2x higher because the AI has already "sold" the user on your solution.

- Partial Attribution (Proxy Metrics): Measuring Brand Search Lift. We look for correlations between a spike in AI mentions and an increase in branded searches or direct site visits.

- Influenced Revenue: Using post-purchase surveys that ask, "How did you first hear about us?" Many users will now answer, "ChatGPT recommended you."

By setting up GA4 custom segments to filter traffic from sources like openai.com or perplexity.ai, we can isolate this high-value cohort. For a deeper dive into these calculations, check out "The Complete Guide to Measuring AI Visibility ROI" (AdsX) and our guide on how to measure brand equity.
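The same filtering logic can be applied to exported referrer data outside GA4. This is a minimal sketch, assuming you have a list of referrer URLs from an export; only openai.com and perplexity.ai come from the text, and any other domains you add (such as the ones a given chatbot actually sends traffic from) should be verified against your own reports.

```python
from urllib.parse import urlparse

# Domains named in the text; extend this tuple after checking your own referral reports.
AI_REFERRER_DOMAINS = ("openai.com", "perplexity.ai")


def is_ai_referral(referrer: str) -> bool:
    """True if the referrer's host is one of the AI domains or a subdomain of one."""
    host = (urlparse(referrer).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in AI_REFERRER_DOMAINS)


# Hypothetical referrer URLs from a traffic export.
referrers = [
    "https://chat.openai.com/",
    "https://www.perplexity.ai/search?q=best+invoicing+app",
    "https://www.google.com/",
    "https://notopenai.com/",
]

ai_visits = [r for r in referrers if is_ai_referral(r)]
print(f"{len(ai_visits)} of {len(referrers)} visits came from AI platforms")
```

Matching on the parsed hostname, not on a substring of the URL, keeps lookalike domains such as notopenai.com out of the AI cohort.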

Frequently Asked Questions about AI Visibility

How often should I monitor AI visibility for my campaigns?

We recommend weekly tracking for core KPIs and monthly deep-dives for competitive benchmarking. Because LLMs update their models and web-crawling patterns frequently, a 7-day refresh cycle is necessary to catch "citation decay." If you see your visibility drop suddenly, it’s usually a signal that your content needs a refresh—pages updated within the last 12 months are twice as likely to retain AI citations.

What is a good AI Share of Voice benchmark?

Benchmarks vary by industry, but generally, a 30% Share of Voice indicates market leadership. If you are a challenger brand, aim for 15–25%. If your SOV is below 10%, your brand is effectively invisible in the AI-driven discovery phase. You should also aim for at least 20 mentions per week in high-intent category queries.

Can I track AI visibility without expensive tools?

Yes. You can start with manual spreadsheets and a prompt library. Run your top 20 queries across ChatGPT and Perplexity once a week and document the results. Tools like the HubSpot AEO Grader can also provide quick, free snapshots of your performance. However, as you scale, automation becomes necessary to handle the statistical variability of AI responses.

Conclusion: Turning Visibility into a Defensible Moat

In 2026, visibility is no longer about occupying a slot on page one of Google; it is about being the "trusted recommendation" in an AI's internal model. By mastering how to measure AI visibility for marketing campaigns, you aren't just collecting data—you are building a strategic force multiplier for your brand.

At The Brand Algorithm, we believe that as AI commoditizes the how of marketing, the who (your brand) becomes your only defensible moat. Measurement is the first step in defending that moat. Use these insights to refine your AI strategy for the C-suite and ensure your brand remains the signal in an increasingly noisy, algorithmic world.